...


This article guides instructors in locating and using the student test reports generated by Respondus Monitor. For an overview of the functions of Respondus Monitor and its partner tool Respondus Lockdown Browser, click here. For guidance on enabling Respondus Monitor, click here.

To locate the reports, open the Respondus Lockdown Browser tool in Scholar and navigate to the list of tests.

Once students have taken the test with Monitor activated, the dropdown associated with the test should include an option to view class results.

This will bring you to a list of all test attempts taken so far, sorted by student, along with key information about each attempt. One of the most important pieces of information here is the level of review priority that should be given to the attempt: high, medium, or low. These levels give you a general idea of how many red-flagged events occurred during a student's test-taking session.

Clicking the plus icon next to the student's name will open the report, including the full video recording of the student's test-taking session. If a student has recorded multiple sessions, this will also allow you to select the report you want to review.

...

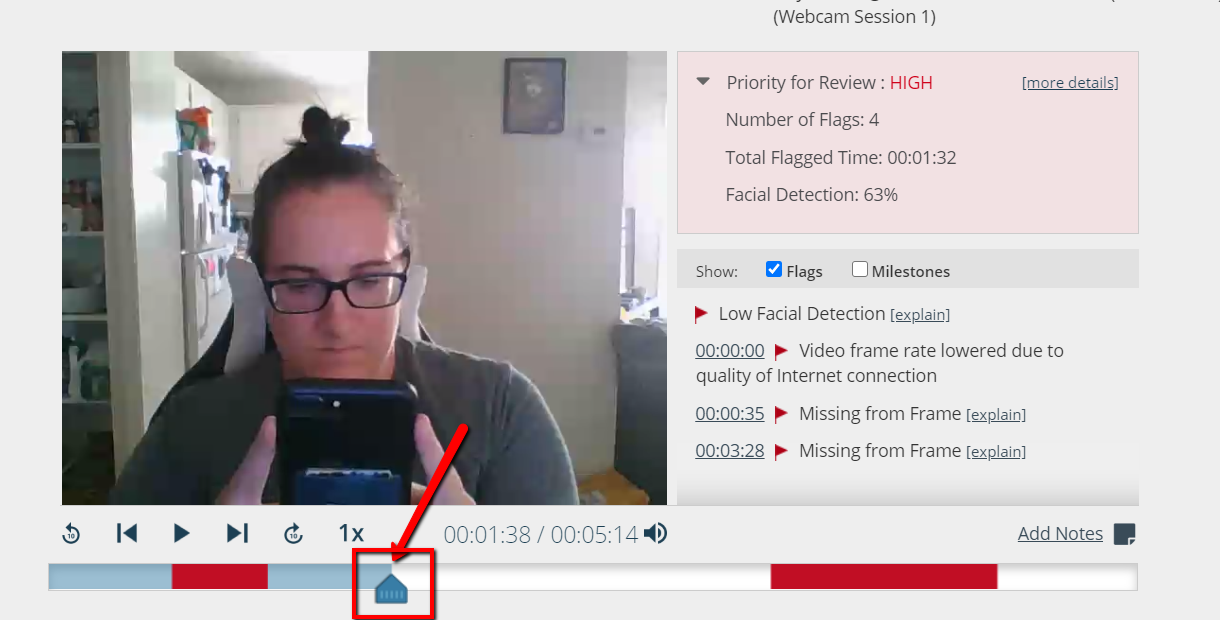

Moments of the recording that have been tagged as possible red flags will be clearly marked. Instructors can play through the entire test-taking session or use the time markers to jump to those red flags. A summary window also shows the total number of red flags, the total amount of flagged time in the recording, and the overall accuracy of the tool's facial detection.

The time markers also come with the option to turn on Milestones, which keep track of when each question in the exam was answered. Turning on Milestones alongside Flags can help show possible connections between the two. For example, a student might have a long flagged period and then answer a difficult question on your exam immediately afterward.

...

At this point, it is important to emphasize that these red flags are generated by an automated algorithm. A red flag can be a false positive when activity looks suspicious but turns out to be innocent, for example, if the student has to leave the computer during an exam due to a sudden emergency at home. Likewise, the algorithm can produce a false negative: in the image below, for example, the student in the video is openly looking at their cell phone in view of the webcam, but the algorithm does not flag it as something to review.

To guard against both false positives and false negatives, it is recommended that instructors check all student reports at least briefly, focusing on high-priority reports or students they believe are high-risk, rather than treating the priority level as concrete proof of student misbehavior. To save time in this process, instructors can play through the video on fast-forward rather than in real time.